Crop yield prediction requires substantial data to train scalable models. However, creating yield prediction

datasets is constrained by high acquisition costs, heterogeneous data quality, and data privacy regulations.

Consequently, existing datasets are scarce, low in quality, or limited to regional levels or single crop types,

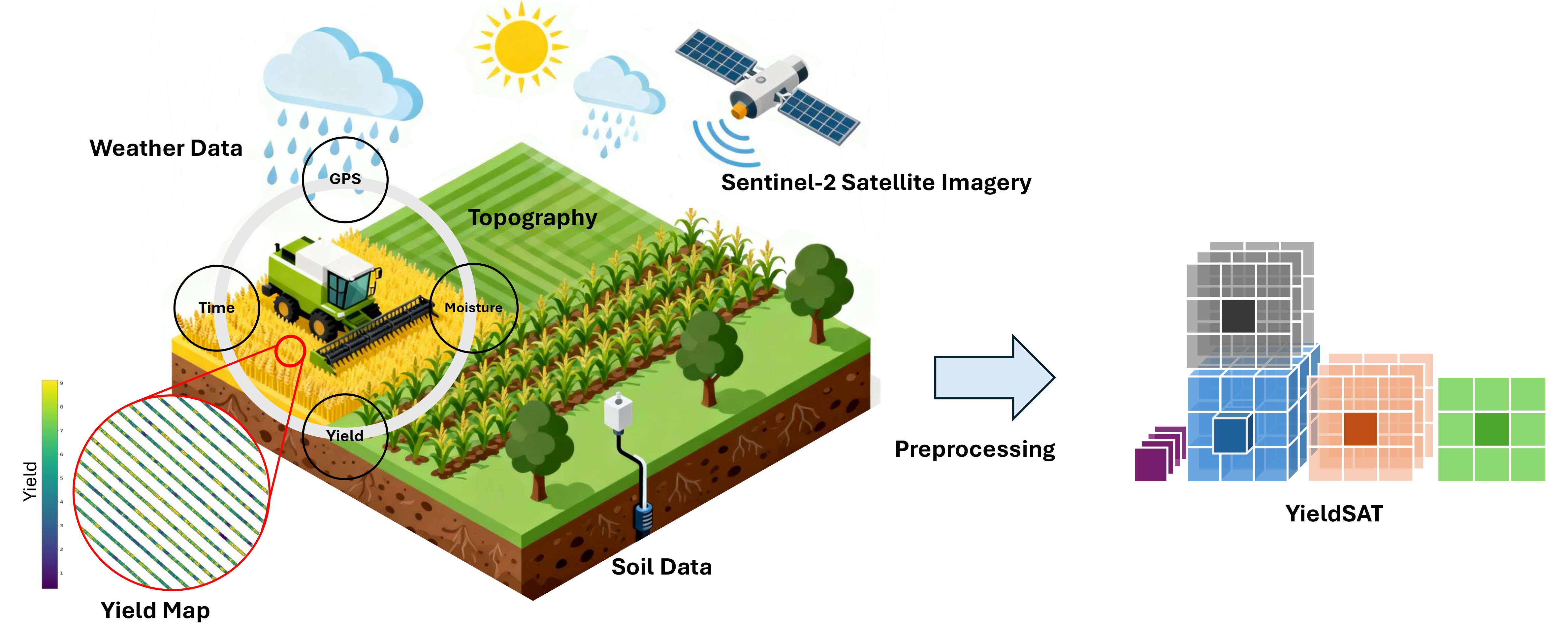

hindering the development of scalable data-driven solutions. In this work, we release YieldSAT,

a large, high-quality, and multimodal dataset for high-resolution crop yield prediction. YieldSAT

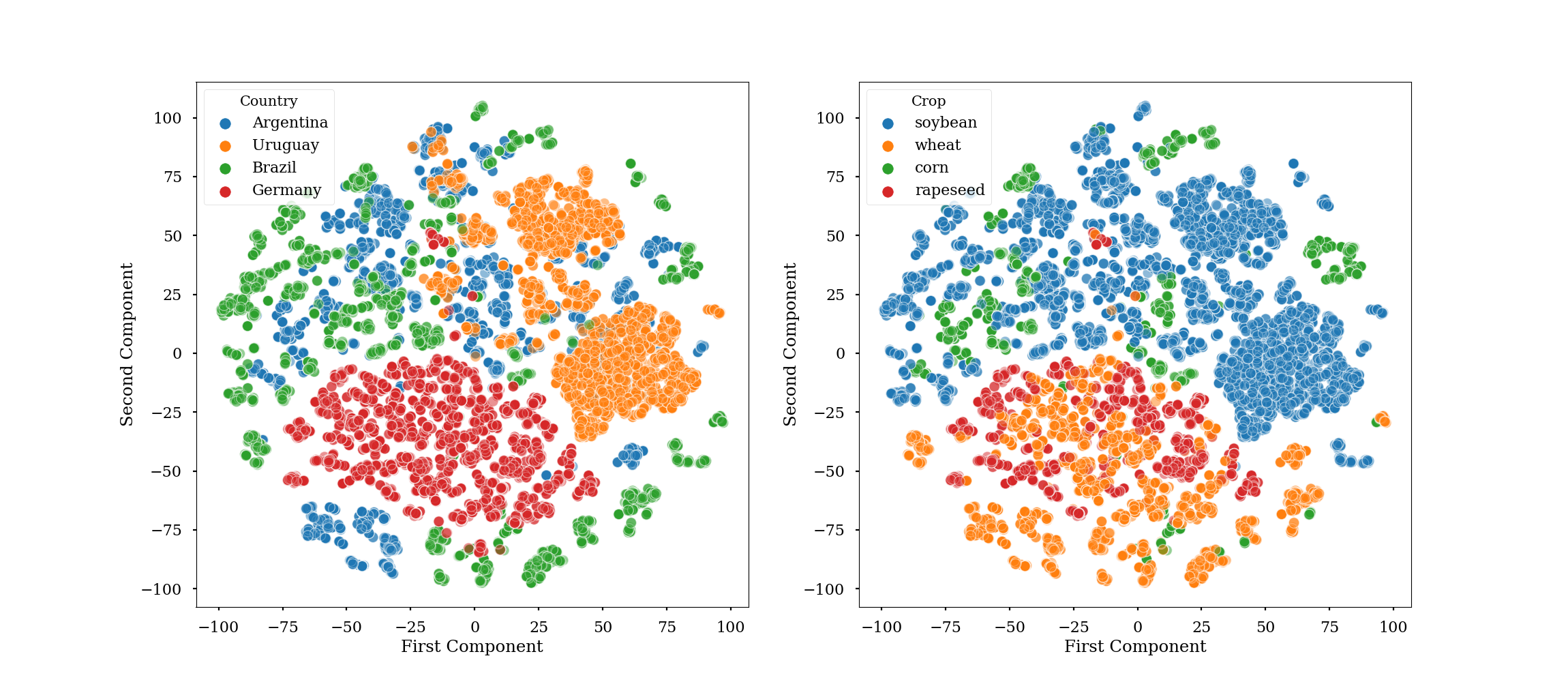

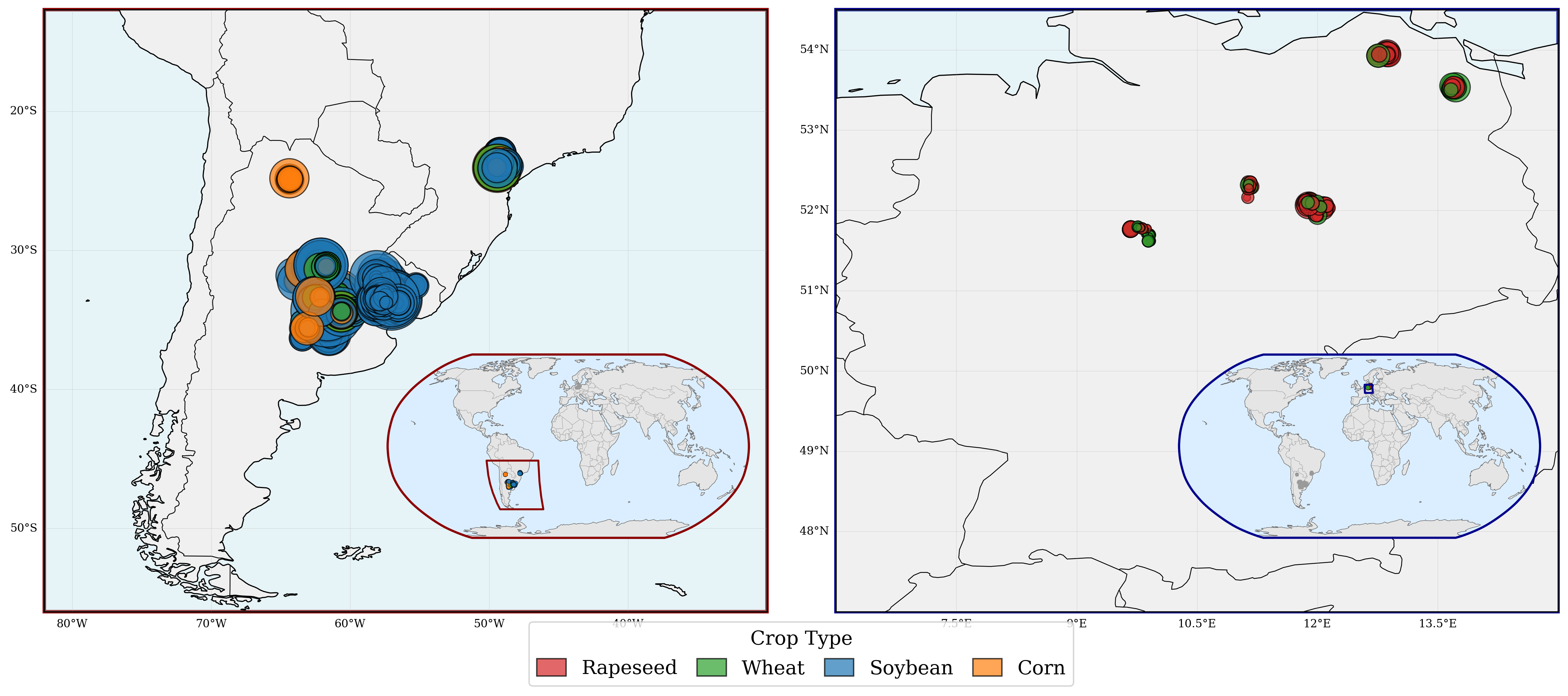

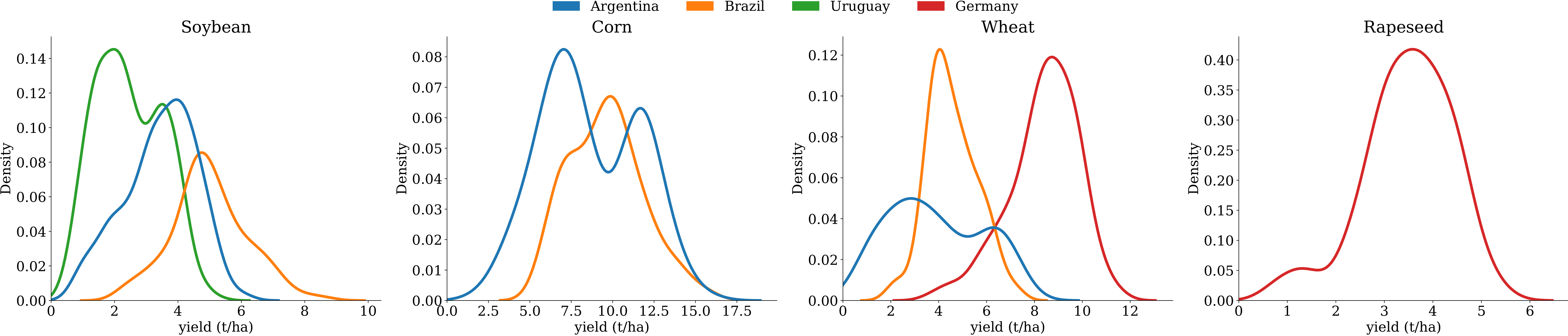

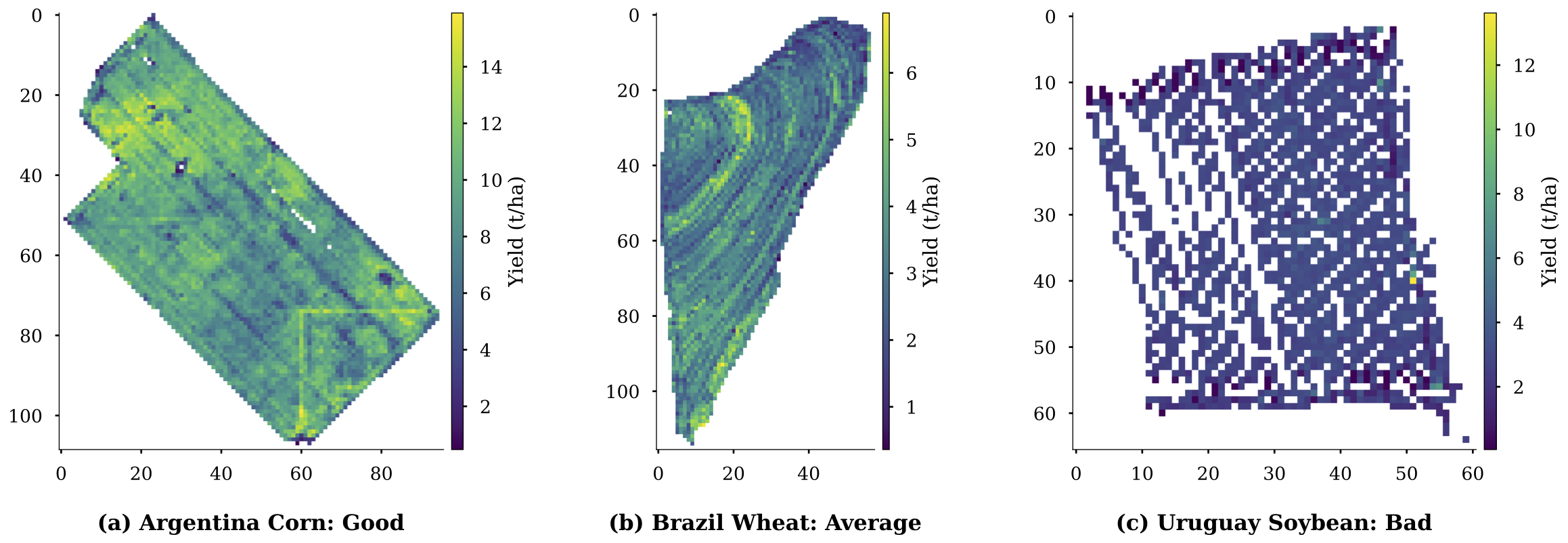

spans various climate zones across multiple countries, including Argentina, Brazil, Uruguay, and Germany, and

includes major crop types, including corn, rapeseed, soybeans, and wheat, across 2,173 expert-curated fields.

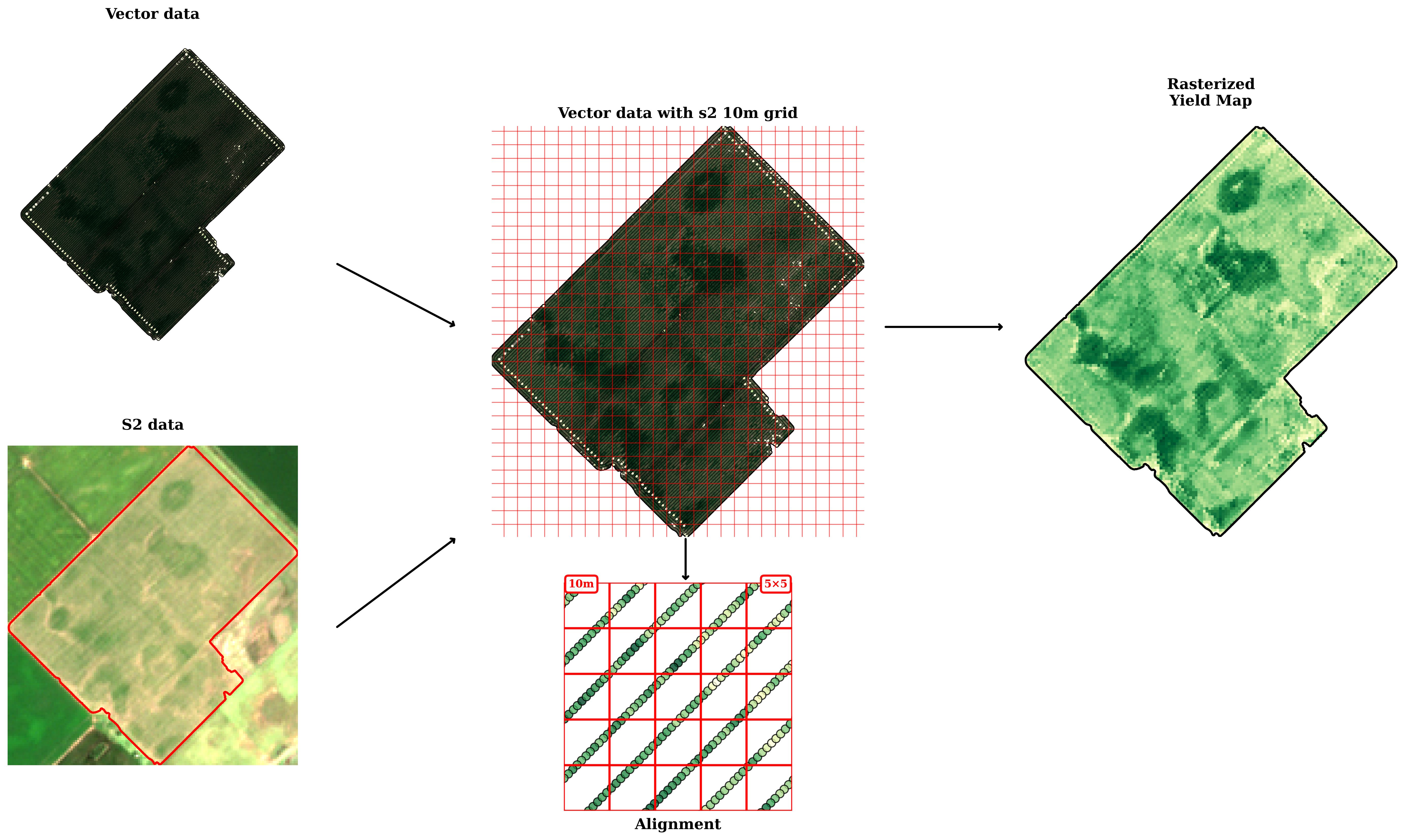

In total, over 12.2 million yield samples are available, each with a spatial resolution of 10 m.

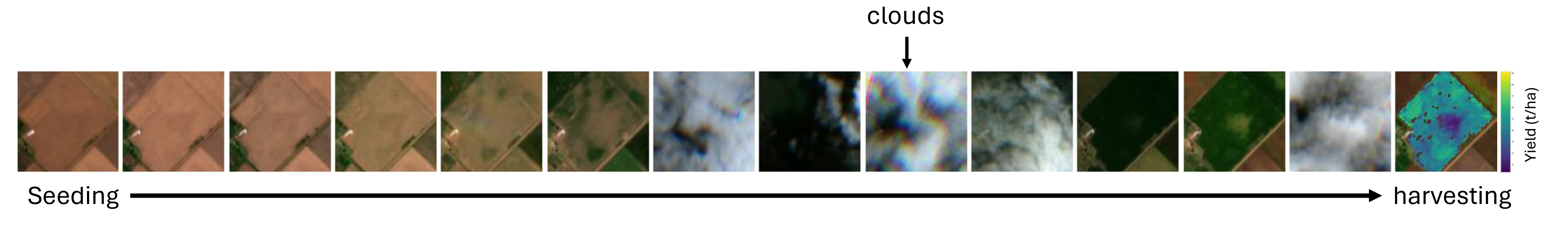

Each field is paired with multispectral satellite imagery, resulting in 113,555 labeled satellite images,

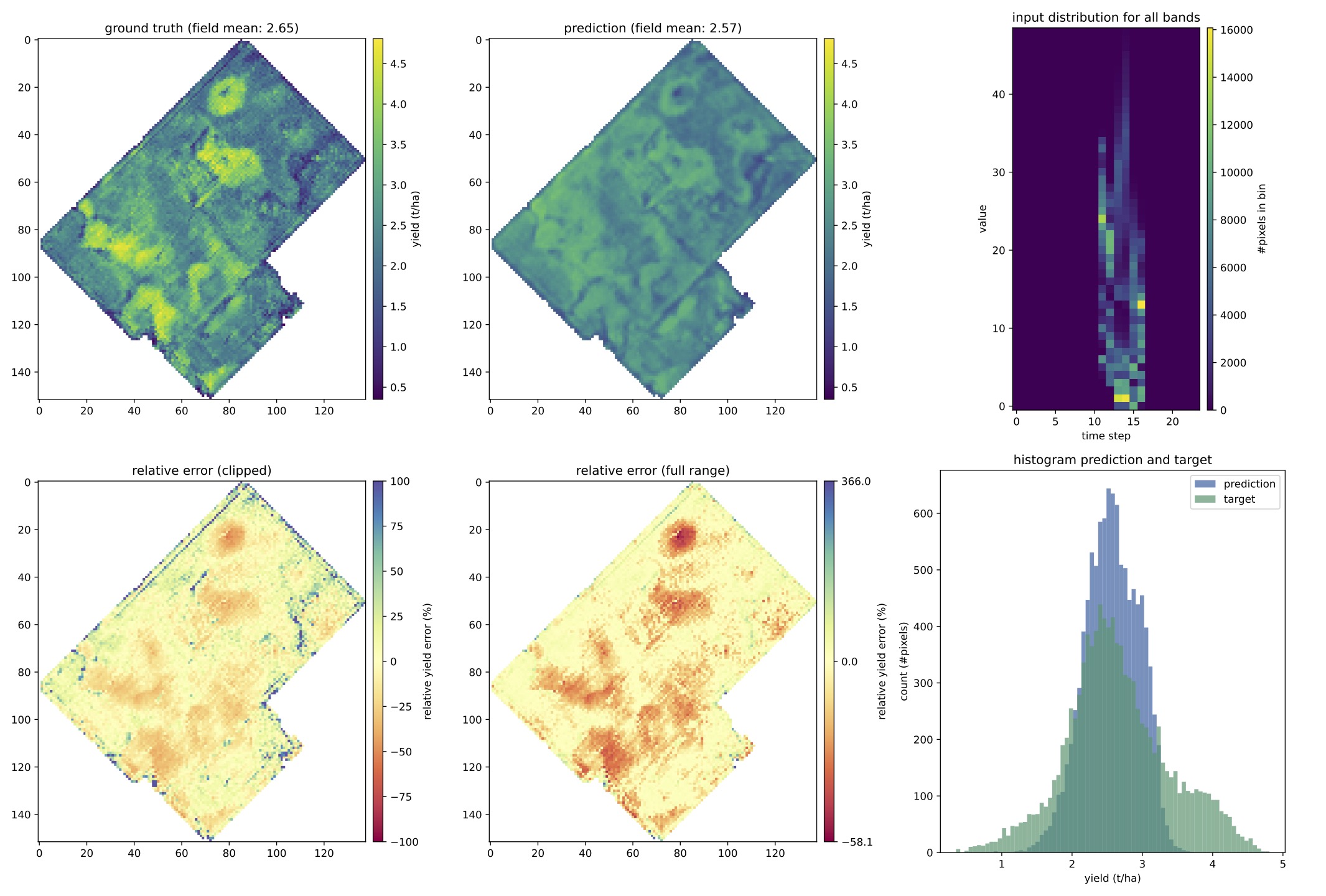

complemented by auxiliary environmental data. We demonstrate the potential of large-scale and high-resolution

crop yield prediction as a pixel regression task by comparing various deep learning models and data fusion

architectures. Furthermore, we highlight open challenges arising from severe distribution shifts in the ground

truth data under real-world conditions. To mitigate this, we explore a domain-informed Deep Ensemble approach

that exhibits significant performance gains.

.png)